Outcomes vs. Outputs: What's the Difference and Why Does It Matter?


Outcomes over outputs—everyone knows the mantra. But putting it into practice can be deceptively hard.

It's relatively easy to understand the concept. We should focus on the impact of what we build rather than just shipping features that people may or may not want. But it's rarely so black and white.

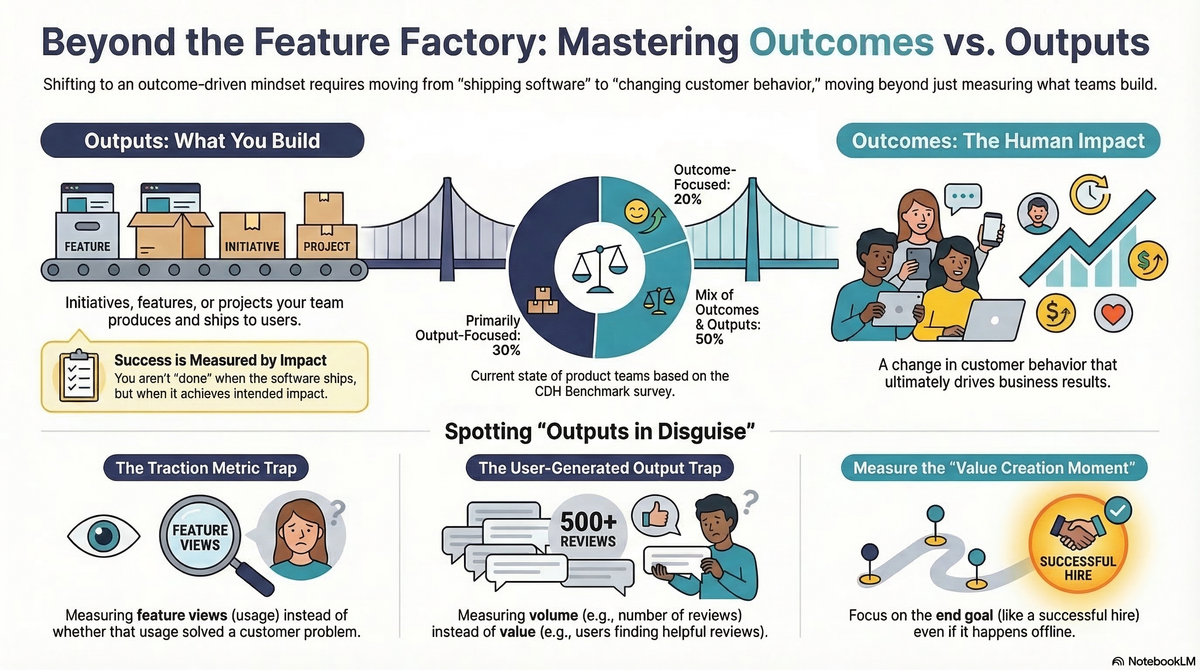

While many teams are being asked to drive outcomes, those same teams are also being asked to deliver specific outputs. In our CDH Benchmark survey, 20% of product teams claim to be outcome-focused, nearly half describe themselves as working in a mix of outcomes and outputs, and about 30% are still primarily working with outputs.

Today, we are going to look at why this is. We'll get specific about the difference between outcomes and outputs. We'll walk through several examples—many of which will expose why many outcomes are really outputs in disguise. We'll explore some of the most common reasons why shifting from outputs to outcomes can be so challenging. And I'll provide some actionable tips to help you define better outcomes.

Finally, for paid subscribers, you'll be able to put your outcome to the test and get feedback from our new Outcome Coach.

Simple Definitions: Outcomes vs. Outputs

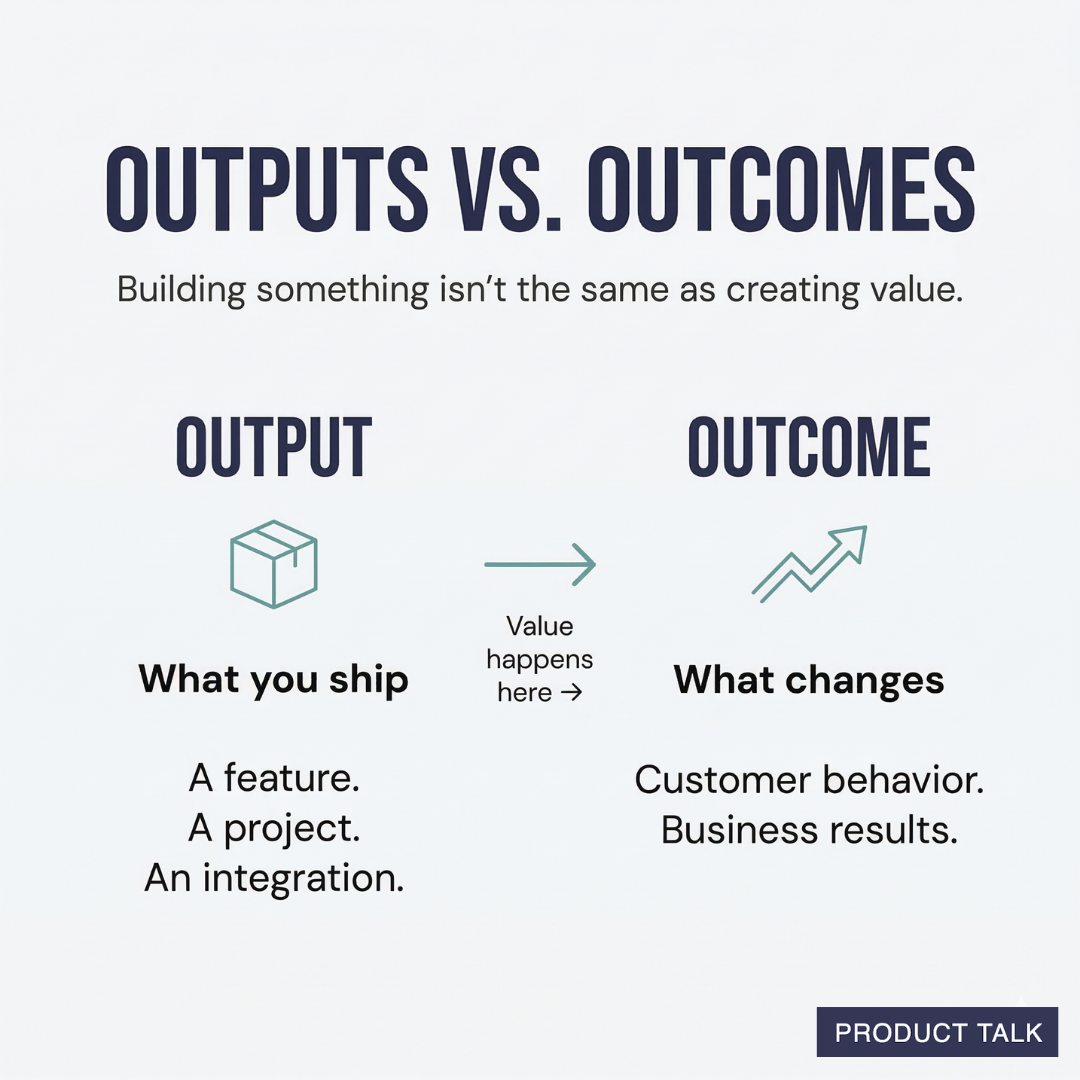

An output is something you build or produce—a feature, a project, an initiative. It's something your team ships.

An outcome is the impact of that output—a change in customer behavior or a business result.

Josh Seiden puts it well in his book Outcomes Over Output: "An outcome is a change in human behavior that drives business results."

I distinguish business outcomes from product outcomes. Business outcomes are typically financial metrics that measure the health of the business (e.g. increase revenue or reduce costs) while product outcomes measure a customer behavior in the product or a sentiment about the product.

Here's a simple example. Many B2B companies support a number of integrations. Integrations are outputs. Having integrations alone doesn't create value. Customers using and finding value in those integrations—that's an outcome. If those customers retain their subscriptions longer because of the integrations—that's also an outcome.

Building something isn't the same as creating value. That's the core of this distinction.

Why This Matters: Outcome-Driven Teams vs. Output-Driven Teams

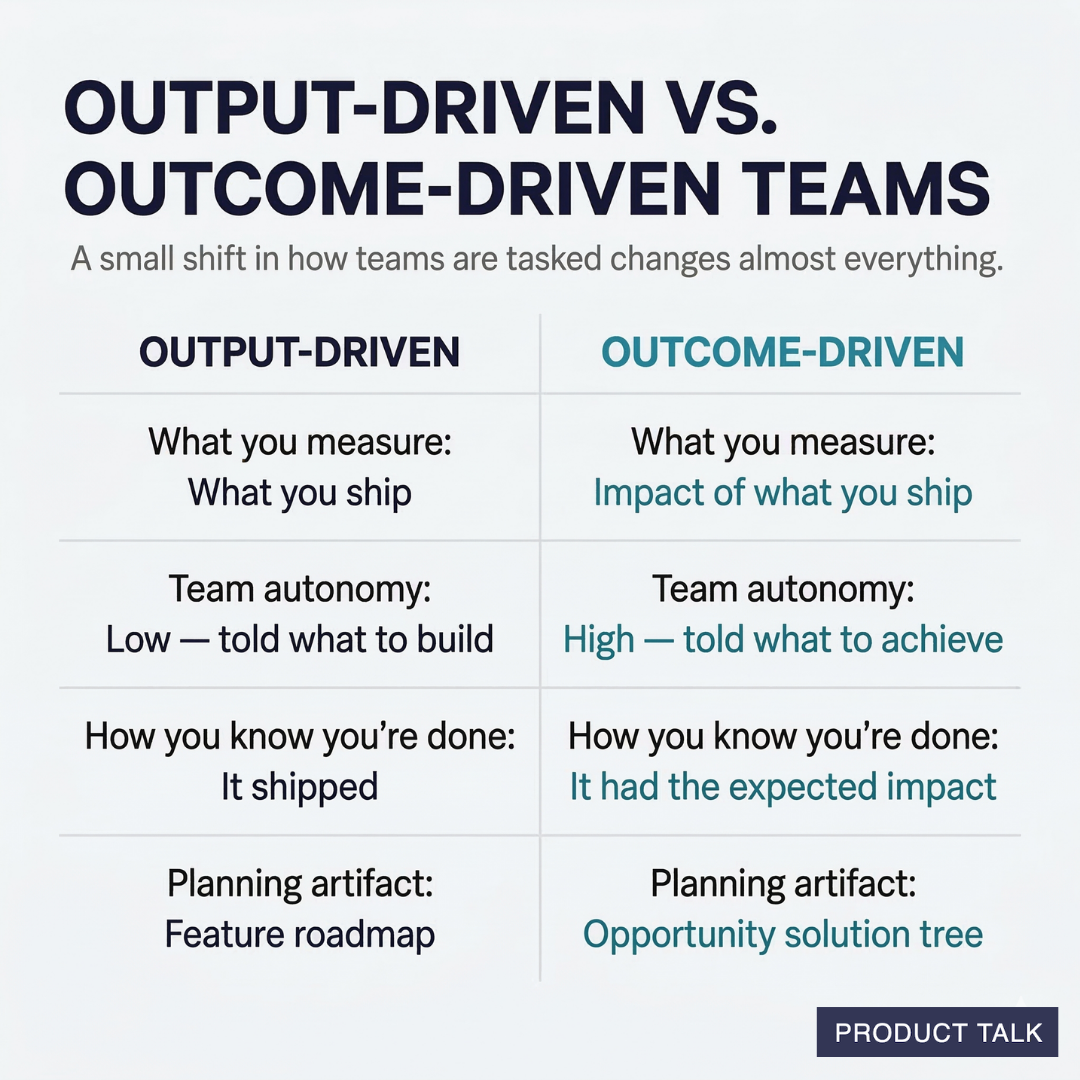

When we task teams with delivering outputs, they're done when the software ships. When we task teams with delivering outcomes, they aren't done until the software ships and has the expected impact.

That might sound like a small difference, but it changes almost everything about how a team works.

| | Outputs | Outcomes |
|---|---|---|
| What you measure | What you ship | The impact of what you ship |
| Team autonomy | Low: told what to build | High: told what to achieve |
| How you know you're done | It shipped | It had the expected impact |
| Planning artifact | Feature roadmap | Opportunity solution tree |

When teams are given outputs to deliver, they have very little latitude to explore the best solution. Their job is to execute a plan someone else made. When teams are given outcomes, they have the freedom, and the responsibility, to figure out the best path to success. This is what opens the door for real product discovery.

Outcomes vs. Outputs: Examples

The best way to understand this distinction is through examples. I'm going to walk through several, starting with a clear-cut example and then moving to the trickier cases—the ones that trip up even experienced teams.

Clear-Cut Example

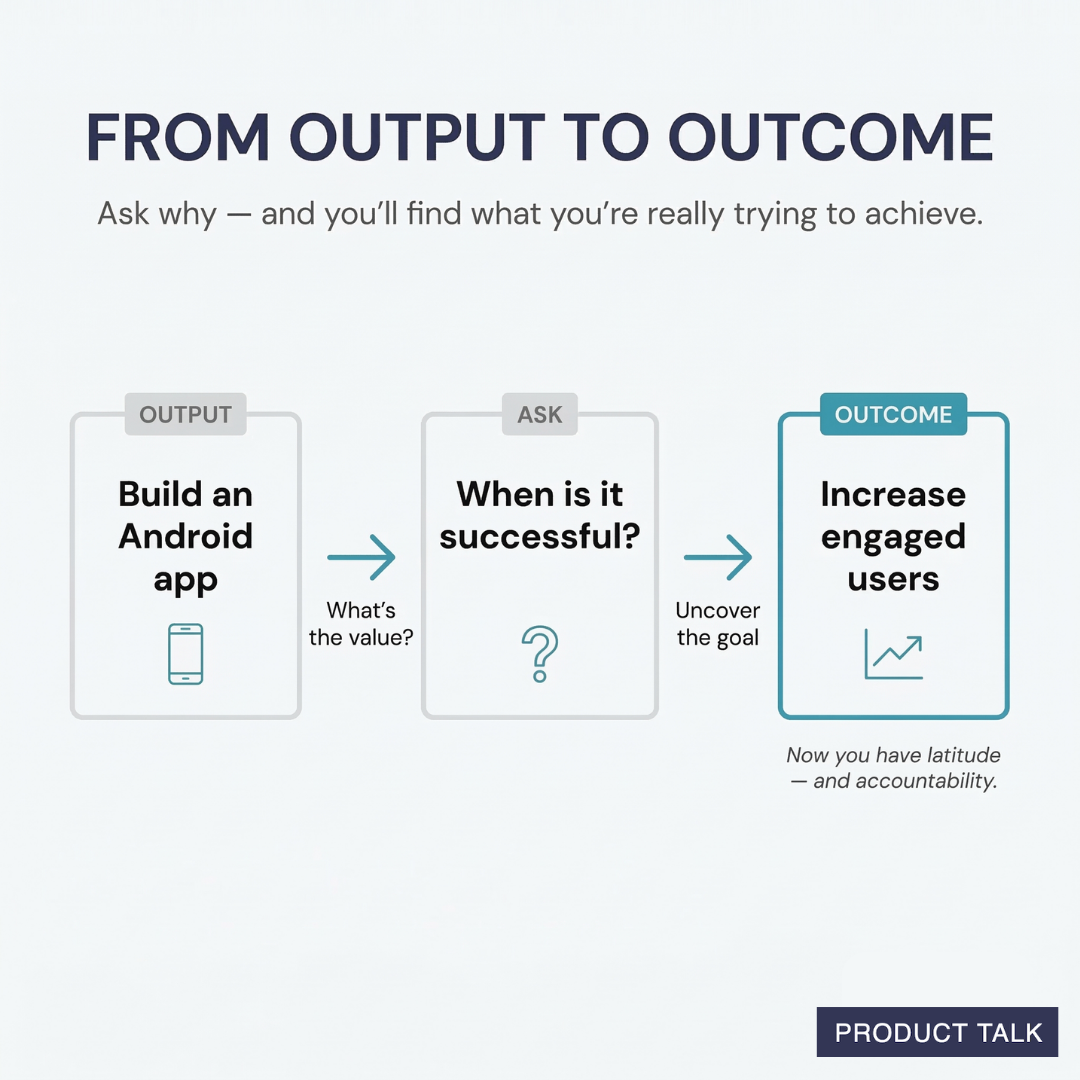

"Our outcome is to deliver an Android app."

An Android app is something we build and ship. It's clearly an output.

To get to an outcome, we might ask, "What's the value of having an Android app?" Or, "How do we know when the Android app is successful?"

We might answer: "Having an Android app will allow us to engage more users. We'll know it's successful when people engage with the app on a regular basis."

This answer helps us uncover the hidden outcome: engage more people. We now want to ask, "Is our goal to engage any type of customer or are mobile customers particularly valuable?" or "Are Android users particularly valuable?"

This allows us to set an outcome at the right scope. We might choose any one of the following:

- Increase the percentage of engaged users across any platform.

- Increase the percentage of engaged mobile users.

- Increase the percentage of engaged Android users.
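
To make the three scopes above concrete, here is a minimal sketch of how the same "percentage of engaged users" metric might be computed at each scope. The `User` record, the platform labels, and the sample data are all hypothetical, and what counts as "engaged" is assumed to be decided elsewhere.

```python
from dataclasses import dataclass


@dataclass
class User:
    platform: str  # hypothetical labels: "web", "ios", or "android"
    engaged: bool  # assumed to come from your own definition of engagement


MOBILE = {"ios", "android"}


def pct_engaged(users, platforms=None):
    """Percentage of engaged users, optionally limited to certain platforms."""
    in_scope = [u for u in users if platforms is None or u.platform in platforms]
    if not in_scope:
        return 0.0
    return 100 * sum(u.engaged for u in in_scope) / len(in_scope)


# Made-up sample data for illustration only.
users = [
    User("web", True), User("web", True), User("web", False), User("web", True),
    User("ios", True), User("ios", False),
    User("android", False), User("android", False),
    User("android", True), User("android", False),
]

print(pct_engaged(users))                       # any platform
print(round(pct_engaged(users, MOBILE), 1))     # mobile only
print(pct_engaged(users, {"android"}))          # Android only
```

The metric is the same in all three cases. Only the population it is measured over changes, which is what setting the scope of an outcome means here.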

Regardless of the scope we set, any of these outcomes gives us more latitude to explore than simply building an Android app. Maybe we don't need an Android app at all. We might be able to engage Android users through a mobile-friendly website or through their inbox.

It also holds us accountable. We aren't done when we build an Android app. We are done when we have engaged the right people.

Tricky Examples: Measuring the Wrong Outcomes

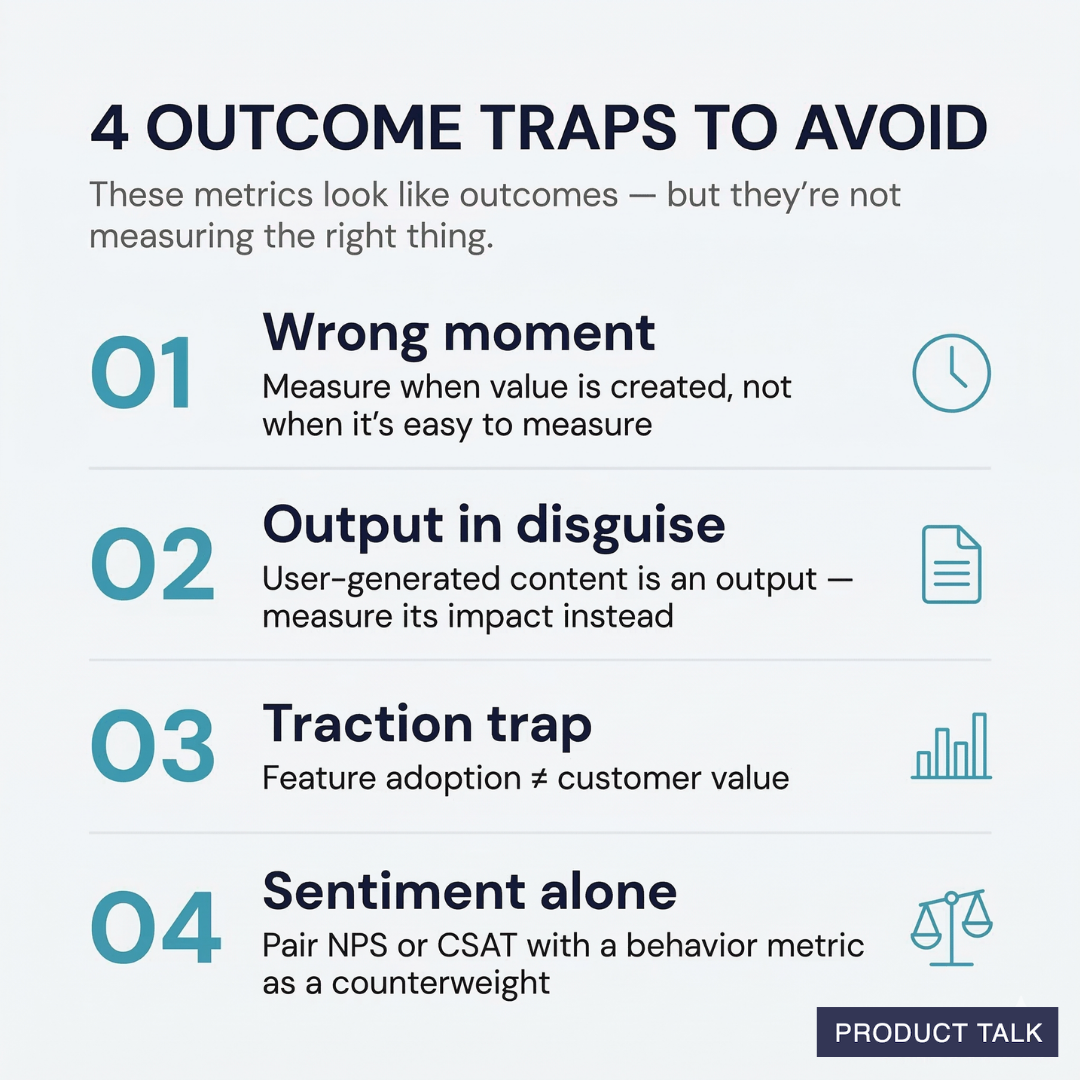

Let's look at a few examples that often fool experienced teams. They look like outcomes—they're metrics, they're measurable—but they're not actually measuring impact.

Measure the value creation moment: Hires, not applicants

When setting outcomes, it can be easy to focus on the metric that is easiest to measure. But a good outcome measures the value a customer gets from using your product.

I worked at a company that helped new college grads find their first job. When I started working there, the primary outcome was "increase job applications." This technically is an outcome—it measures a specific behavior in the product.

But it doesn't measure the value creation moment. A job seeker doesn't get value when they apply for a job. They only get value when they get the job. Similarly, employers don't get value from just any job applicant. They get value when the right job applicant applies.

Many job boards try to measure qualified applicants—instead of counting any applicant, they compare the credentials of the applicant to the job description and only count qualified applicants. This is better. But it still doesn't measure the value creation moment. Both the job seeker and the employer get value when an open job is successfully filled. The right metric is hires.

Now there are a lot of systemic reasons for why hires are hard to measure. The value creation moment doesn't happen on the website. It happens much later—after an interview, after an offer, once the offer is accepted. Job seekers who recently got a job no longer need the service and aren't likely to return to close the loop. Employers are afraid to tell job boards when they hire someone, as this gives the job board leverage to raise prices. It's definitely not an easy metric to measure.

But the easy-to-measure metric isn't always the right outcome. Push to measure the value creation moment even if it happens outside of your service.

Measure impact, not user-generated output: The course reviews trap

I worked with a team that helped students choose university courses. They set their outcome as: "Increase the number of course reviews on our platform."

Sounds like an outcome, right? It's a metric. You can measure it. It's an action users take on the site—writing a review. But it's actually an output in disguise.

Reviews are valuable when they help a student evaluate a course. They don't create any value if a student never sees them. More reviews aren't always better. What if all of the reviews were clustered on course pages that nobody ever saw?

Reviews are outputs. In this case, they are outputs generated by our users. Those outputs can create value, but only if other users see them and benefit from them.

A better outcome is "Increase the number of course views that include reviews."

See the difference? The first measures what your users produce (reviews). The second measures the impact on the customer's experience (seeing courses with reviews when they're making decisions).
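
A small sketch can show how the two metrics diverge. It assumes a hypothetical `CoursePage` record with a review count and a view count, and the numbers are invented. It is not how the team in this story actually instrumented their product.

```python
from dataclasses import dataclass


@dataclass
class CoursePage:
    name: str
    review_count: int  # reviews written for this course
    views: int         # times students viewed this course page


def total_reviews(pages):
    """The output-style metric: how many reviews exist."""
    return sum(p.review_count for p in pages)


def views_with_reviews(pages):
    """The outcome-style metric: course views where at least one review was there to see."""
    return sum(p.views for p in pages if p.review_count > 0)


# Made-up data: most reviews sit on a page almost nobody visits.
pages = [
    CoursePage("Obscure Seminar", review_count=40, views=5),
    CoursePage("Intro to Statistics", review_count=0, views=900),
    CoursePage("Organic Chemistry", review_count=2, views=600),
]

print(total_reviews(pages))       # 42
print(views_with_reviews(pages))  # 605
```

In this made-up data, adding more reviews to "Obscure Seminar" raises the first number but barely moves the second. Getting a single review onto "Intro to Statistics" would add 900 views that include reviews.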

To test whether something is an output or an outcome, question the impact it will have. If you can achieve the metric without actually helping customers, it's probably an output in disguise.

Measure success, not just adoption of features: The traction metric trap

"Increase the percentage of users who viewed the performance report."

This looks like a good outcome. It measures a specific behavior in the product. It's within the team's control. But it's what I call a traction metric—it measures adoption of a single feature, not value to the customer.

There are two issues with using traction metrics as your primary outcome. First, many people could view your performance report, but not find what they need. This metric measures usage, but not whether that usage was successful.

Second, we might have perfectly happy customers who don't need the performance report. If that's true, the last thing we want to do is drive people to use features they don't want or need.

When we measure the value creation moment, rather than feature adoption, we measure what customers want, not what we want them to do.

Pair sentiment metrics with behavior metrics

I define a product outcome as a metric that measures either 1. a specific behavior in the product or 2. a sentiment about the product. But sentiment metrics—like CSAT or NPS—can be tricky on their own.

Sentiment metrics are outcomes, but they aren't directional. They don't tell us where to explore. Nor do they set any guardrails for where we shouldn't explore. As a result, we don't know where to focus.

Instead of choosing a sentiment metric alone, I like to pair a sentiment metric with a behavior: "Increase engagement without negatively impacting satisfaction." I like to use sentiment metrics as counterweights.
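
One way to picture the counterweight idea is as a simple check with two conditions. This is only a sketch: the function name, the inputs, and the `csat_tolerance` parameter are assumptions for illustration, and how you measure engagement and satisfaction is up to your team.

```python
def outcome_met(engagement_before, engagement_after,
                csat_before, csat_after, csat_tolerance=0.0):
    """True only if engagement rose AND satisfaction did not drop
    by more than the (hypothetical) tolerance."""
    engagement_up = engagement_after > engagement_before
    satisfaction_held = csat_after >= csat_before - csat_tolerance
    return engagement_up and satisfaction_held


# Engagement rose and satisfaction held steady.
print(outcome_met(0.30, 0.35, csat_before=4.2, csat_after=4.2))  # True

# Engagement rose even more, but satisfaction fell.
print(outcome_met(0.30, 0.40, csat_before=4.2, csat_after=3.8))  # False
```

The behavior metric sets the direction and the sentiment metric acts as the guardrail. A gain in engagement that costs satisfaction does not count as success.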

Facebook and Instagram illustrate why this can be valuable. Meta is exceptional at driving engagement—but to a fault. Many of us don't like these addictive products. Instead of focusing on engagement at all costs, they could have benefited from pairing engagement with a sentiment metric—only increase engagement to the level where satisfaction isn't negatively impacted.

Why Getting This Right Is So Hard

If the distinction between outcomes and outputs were easy, everyone would have made the shift by now. But there are real structural reasons why teams struggle.

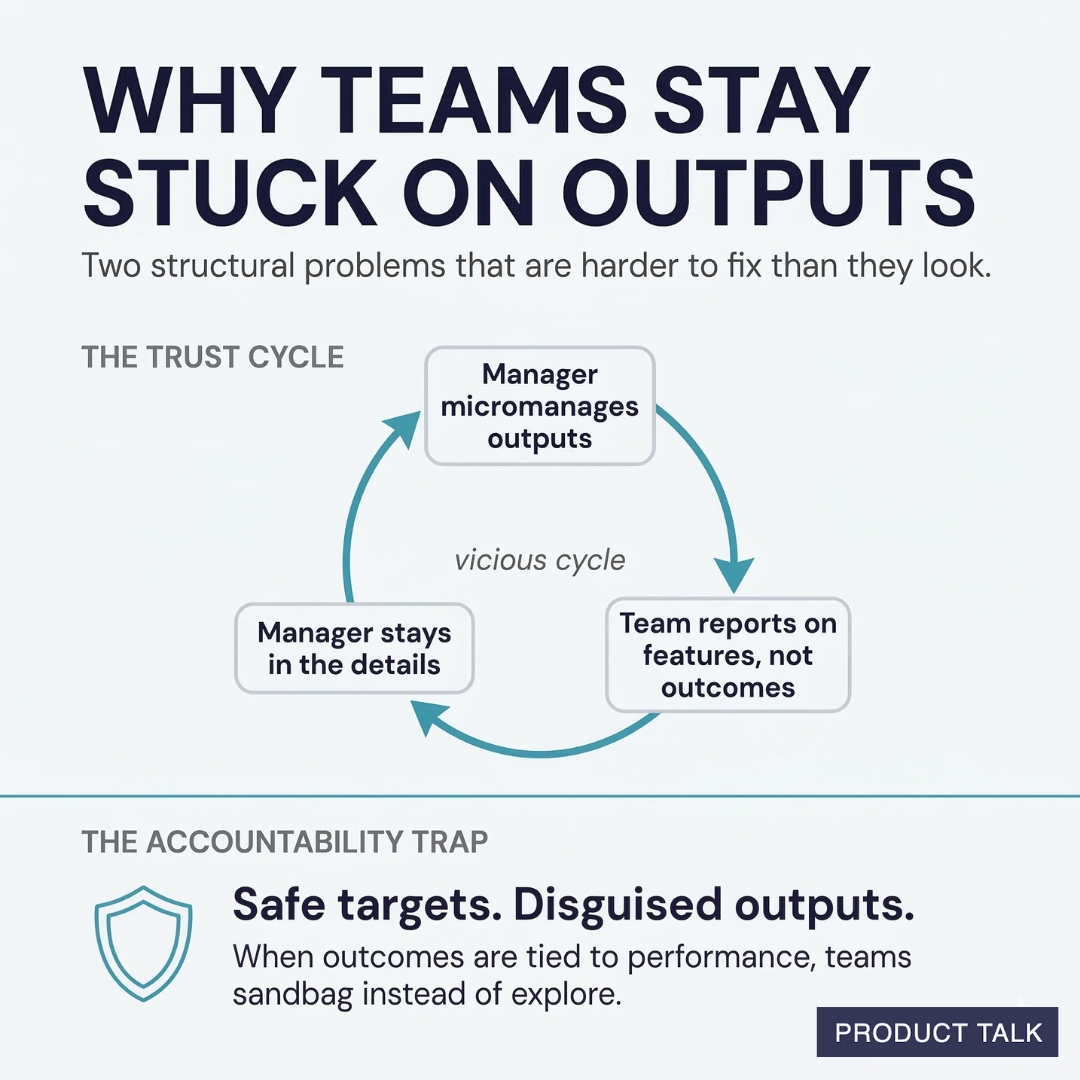

The trust cycle. Managers don't trust that teams can reach outcomes on their own. So managers micromanage the outputs. Teams, in turn, don't communicate their progress toward outcomes—they communicate their progress on features. This reinforces the manager's belief that they need to stay involved in the details. It's a vicious cycle.

Breaking it requires work on both sides. Teams need to learn how to show their work—not just present conclusions, but walk stakeholders through the thinking that led to those conclusions. And managers need to learn how to give feedback on the thinking, not just react to the solutions.

The accountability trap. When performance reviews are tied to hitting outcomes, teams play it safe. They sandbag their targets. They disguise outputs as outcomes so they can guarantee "success." This defeats the whole purpose.

I recommend treating outcomes as learning opportunities, especially at first. When a team is working on a new outcome, set a learning goal—"learn what moves the needle on this metric"—before setting a performance goal—"increase X by Y%." This gives teams space to explore without the pressure to deliver immediate results.

How to Get Started with Better Outcomes

If you want to make the shift from outputs to outcomes, here are a few practical steps.

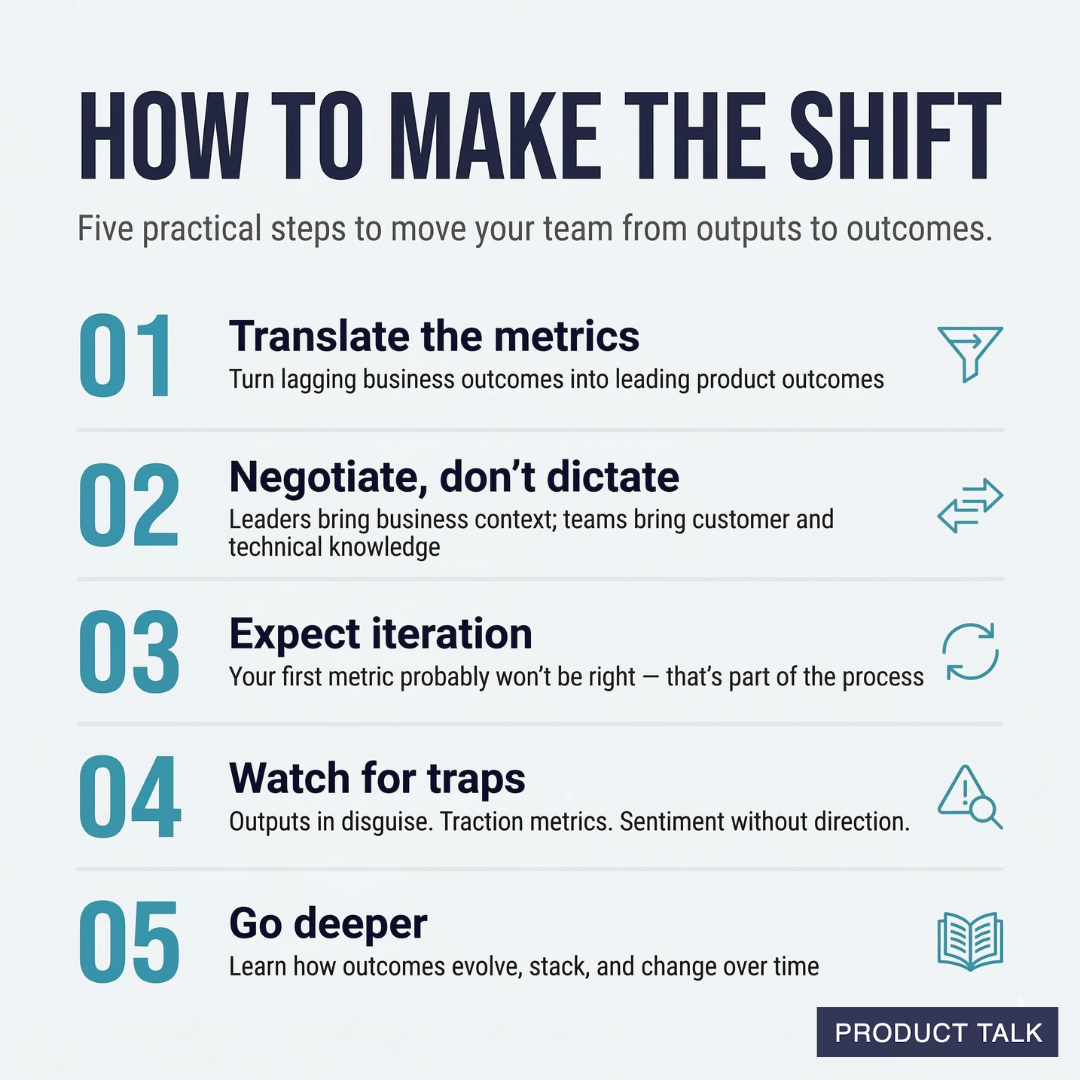

Translate business outcomes to product outcomes. Business outcomes like revenue, retention, and market share are lagging indicators—by the time you see them, it's too late to act. Product outcomes measure behavior changes within the product that lead to those business results. They're leading indicators within the team's control. (Learn how to translate business outcomes to product outcomes.)

Negotiate outcomes with your team. Setting outcomes should be a two-way conversation. The leader brings the across-the-business view of what the business needs. The team brings customer and technology knowledge, and communicates how much they believe they can move the metric. Neither side should dictate. (Read more about this negotiation.)

Expect to iterate on your metrics. Your first outcome metric probably won't be right. That's normal. Sonja at tails.com went through four iterations—from 90-day retention to 30-day to 5-day to behavior-based metrics—before landing on something actionable. Thomas at Bluestone Analytics iterated three or four times before finding the right metric. This is part of the process, not a failure.

Watch for common mistakes. Outputs disguised as outcomes. Traction metrics masquerading as product outcomes. Sentiment metrics without direction. Business outcomes assigned directly to product teams. Hope Gurion and I shared the most common mistakes in this video.

Go deeper. For a comprehensive overview of how outcomes work in continuous discovery—including how long to spend on an outcome, how many outcomes a team can work on at once, and how outcomes change over time—see my full guide to shifting from outputs to outcomes.

And if your company uses Objectives and Key Results (OKRs), be sure to check out my OKRs vs. Outcomes article.

The Bottom Line

When we shift from an output-first mindset to an outcome-first mindset, it doesn't mean that outputs stop mattering. Product teams will always ship features. And the ability to do so quickly while maintaining quality is critical. This shift is about making sure those features have the intended impact. We aren't done when we ship. We are done when what we shipped has the intended impact.

When you measure success by what you ship, you get a feature factory. When you measure success by the impact of what you ship, you get a product team that learns, adapts, and creates real value.

Put Your Outcome to the Test: Get Feedback from Our Outcome Coach

Enter your team's outcome below and get feedback on whether it's a true outcome, an output in disguise, a traction metric, or something else—along with suggestions for how to improve it.

Product Talk is a reader-supported publication. To access the Outcome Coach below, upgrade to a Supporting Membership or a CDH Membership.