Have you heard? My new book Continuous Discovery Habits is now available. Get the product trio's guide to a structured and sustainable approach to continuous discovery.

Have you run an experiment in the past day, week, or month?

Can you clearly articulate your hypothesis?

Do you know under which conditions your hypothesis will pass or fail?

Do you know how to interpret the data in a way that drives successful product decisions?

Many of us are new to hypothesis testing. Tools like Optimizely, Visual Website Optimizer, and UserTesting.com make it easy to jump right in and get started.

But if we don’t take a step back and look at our hypothesis and experiment design, we are unlikely to get actionable data from our efforts.

In this post, I outlined the 14 most common mistakes people make when getting started with hypothesis testing. Today, we are going to take a look at one of the most common ones: Not knowing what you want to learn.

I’ve written before about why you shouldn’t test everything and anything. Not only does it take too much time, but you are also unlikely to get good results from it. You’ll frustrate your team and risk releasing change after change that has no impact.

Instead, you should always start with a clear goal.

Ask yourself, “What am I trying to learn and how can I design an experiment to learn that?”

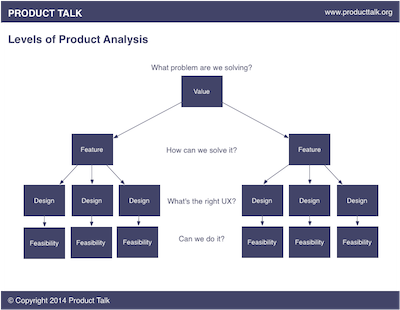

In the above video, I walk through four levels of product analysis. You can (and should) run experiments at each level. They include:

- Value: What problem are you solving?

- Features: How might you solve that problem?

- Design: What is the right design for this solution?

- Feasibility: Is this solution feasible?

Watch the video or read the full transcript below.

Full Transcript

As we develop product ideas, we want to start by understanding:

- What problem we are solving or what value we can deliver.

- How we might solve that problem with different features.

- What the right design for each feature might be.

- And whether or not the specific design is technically feasible.

We can run experiments to test at each level of product analysis.

Too often, we jump right into testing a feature or the design of a specific feature before we know whether or not that feature or design creates value. The problem with this approach is that it can lead to wasted effort and misinterpreted experiment results.

Let’s look at an example.

Suppose you are working on an eCommerce site and you find out that shoppers are having a hard time finding specific products. You decide you want to expand the set of search filters that you offer. Since you have an experimental mindset, you decide to A/B test this change.

Your hypothesis might be:

By adding more search filters, shoppers will find specific products faster.

To make this a good hypothesis, we need to get more specific:

By adding more search filters, 30% more shoppers will find specific products within 1 minute of searching.
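One way to keep ourselves honest about a hypothesis this specific is to write it down as a check we can run against the experiment results. The Python sketch below is only an illustration. It assumes "30% more shoppers" means a 30% relative lift in the share of shoppers who find a product within one minute, and the `Hypothesis` class, its field names, and the sample counts are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    change: str               # what we are changing
    metric: str               # what we expect to move
    min_relative_lift: float  # 0.30 means "30% more" (assumed interpretation)


def success_rate(successes: int, shoppers: int) -> float:
    """Share of shoppers who found a product within the time limit."""
    if shoppers <= 0:
        raise ValueError("shoppers must be positive")
    return successes / shoppers


def relative_lift(control_rate: float, variant_rate: float) -> float:
    """Relative change of the variant over the control."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (variant_rate - control_rate) / control_rate


def hypothesis_passes(h: Hypothesis, control_rate: float, variant_rate: float) -> bool:
    """True when the observed lift meets the threshold stated in the hypothesis."""
    return relative_lift(control_rate, variant_rate) >= h.min_relative_lift


search_filters = Hypothesis(
    change="add more search filters",
    metric="shoppers who find a specific product within 1 minute",
    min_relative_lift=0.30,
)

# Made-up counts, for illustration only.
control = success_rate(200, 1000)  # existing filters
variant = success_rate(270, 1000)  # expanded filters
lift = relative_lift(control, variant)

print(f"Observed lift: {lift:.0%}")
print("Passes:", hypothesis_passes(search_filters, control, variant))
```

The point of the sketch is that the pass condition is fixed before we look at the data. It ignores sample size and statistical noise, which a real analysis would need to account for.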

If you want to learn more about how to define a good hypothesis, please see the video: The 5 Components of a Good Hypothesis.

Next we need to ask ourselves:

What will we do if this hypothesis passes, fails, or has no impact?

If the hypothesis passes, it’s a no-brainer: we want to release this change.

But if the hypothesis fails or has no impact, what should we do?

You might conclude that people don’t want search filters or that they simply don’t work. But this conclusion is too broad.

It’s possible that we have the wrong design. There are many ways to implement search filters. Some sites show them across the top, some on the left rail, others on the right rail. Some sites use checkboxes. Others use links. Each of these design decisions may affect whether the hypothesis passes or fails.

But we don’t want to charge off and test every design. There are countless design alternatives and we don’t want to test anything and everything.

Instead, we want to move up the levels of product analysis. We want to ask ourselves, is this the right feature?

There are other ways that we can help shoppers get to specific products faster. For example, we could implement product recommendations. We could improve our search relevance. We could offer a daily deal.

And sometimes we might need to move up even one more level and ask ourselves, do shoppers even want to get to specific products faster? Maybe they enjoy the hunt. Maybe browsing is fun.
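The idea of moving up a level after a failed test can be written down as a small lookup. This is a minimal sketch, not a prescribed process: the `Level` enum mirrors the four levels of product analysis, and the `level_to_revisit` helper is a hypothetical name introduced here for illustration.

```python
from enum import IntEnum
from typing import Optional


class Level(IntEnum):
    VALUE = 1        # What problem are you solving?
    FEATURES = 2     # How might you solve that problem?
    DESIGN = 3       # What is the right design for this solution?
    FEASIBILITY = 4  # Is this solution feasible?


def level_to_revisit(tested: Level, passed: bool) -> Optional[Level]:
    """Return the level to question next.

    Returns None when the test passed, or when the test was already at the
    value level and there is no higher level to move up to.
    """
    if passed or tested is Level.VALUE:
        return None
    return Level(tested - 1)


# A failed design test sends us back to the feature question,
# and a failed feature test sends us back to the value question.
print(level_to_revisit(Level.DESIGN, passed=False))
print(level_to_revisit(Level.FEATURES, passed=False))
```

In the search filter example, a failed test of one filter design would point us to the feature question (are filters the right feature?) before we spend time on more design variations.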

With each test we run, we need to be cognizant of what level of product analysis we are working at.

Are we verifying that a problem exists and / or testing a specific value proposition?

Are we testing a feature that can deliver on that value proposition or solve that problem?

Are we testing a specific design that implements that feature?

Or are we testing the technical feasibility of a design or feature?

Knowing which level we are testing helps us to draw better conclusions from our experiments.

Are you interested in learning more? Please visit the Hypothesis Testing resource page.